Pandora's box is a metaphor for endless complications or trouble arising from a single, simple miscalculation. Rooted in ancient Greek mythology, Pandora's story derives from the poems of Hesiod. Written around the 7th century BC, the poems relate how the gods created Pandora and how Zeus's gift ultimately ended mankind's Golden Age. Could artificial intelligence (AI) be the Pandora's box of our time?

OpenAI's Chat Generative Pre-trained Transformer (ChatGPT) and other AI-based text-generation software have dominated the limelight for the last 12 months. Their ready availability has been met with both delight and annoyance by users worldwide. Early experience suggested that outputs were flawed and, in some cases, simply wrong. As user experience has grown, AI's propensity to hallucinate has led many to conclude that it cannot be integrated into research or medicine. Nevertheless, there has been considerable interest in its use for clinical diagnostics and decision-making, community outreach, and medical writing [1][2].

Various arguments extol the potential benefits AI offers to medical writing, though research so far has focused mainly on grant applications, where its potential to affect the scientific literature is limited [3]. However, many people overestimate AI's capabilities and, as a result, overlook its limitations [4]. The International Committee of Medical Journal Editors (ICMJE) and the World Association of Medical Editors (WAME) have recently commented on AI's limitations and issued recommendations for its use in scientific writing and peer review [5][6]. Leading concerns associated with the undisciplined use of AI include hallucination, lack of context, unapproved data sharing, biased reporting, and risk of plagiarism [1][2][5][7].

Despite these concerns, many scientists use tools like ChatGPT to prepare their publications and other communications. A straw poll at the recent annual conference of the Pharmaceutical Contract Management Group showed that even those working in the highly regulated pharmaceutical industry have not been able to resist the temptation of ChatGPT. One must assume that these users did so in direct contravention of their companies' operating policies, which typically address the protection of confidential information [8].

Most users enthusiastically claim that AI improves their workflow, and its ready accessibility has been welcomed by the scientific community [1][2]. ChatGPT can generate lists of relevant data sources, identify keywords, and map out potential search fields, contributions that can help in writing a manuscript's background, introduction, and even discussion. Perhaps more controversially, large language models (LLMs) can generate increasingly detailed outlines and may improve language and grammar beyond the author's own skills (particularly for authors whose native language is not English) [9][10]. It has even been suggested that AI can provide rapid 'reviews' of early drafts. In a study evaluating ChatGPT as a language editor and writing coach [9], the software performed well as a language editor, identifying 5–14 edits per paragraph. However, it was inconsistent as a writing coach, often altering the meaning of sentences or using technical terms inaccurately.

This week, the journal Nature reported on a group of scientists who built an LLM-based 'AI Scientist' that performs tasks ranging from reading the literature to writing and reviewing its own papers. However, the report concluded that the output so far is not earth-shattering and that the system can conduct research only within the field of machine learning itself [11].

For many, writing manuscripts is one of the most time-consuming and difficult parts of the research process, and many authors feel that this time could be better spent generating or analysing data to advance their research [5]. However justified that view, gains in one area of scientific practice can lead users to overlook limitations elsewhere. For example, writing and publishing case reports is seen as a rite of passage for promising young clinicians [12]. ChatGPT has been used both to write case reports and to peer review published articles [13]. The AI-generated case reports received mixed ratings from peer reviewers, with 33.3% of professionals recommending rejection, and their overall merit score was 4.9±1.8 out of 10. Ultimately, the system was more accurate in generating text than in analysing the results of published articles.

Purists might argue that the ability to communicate science clearly is a key skill scientists must master to progress in their careers. Will 'opening the box' of ChatGPT and its ilk produce a generation of less skilled and lazier scientists? Will over-reliance on technology erode creative and critical thinking, and with it the ability to judge writing quality independently? In one field experiment, one group of consultants worked with AI assistance while another followed standard practice. As might be expected, the AI-assisted group outperformed the non-AI group on almost every performance measure. However, the AI-assisted group also tended to over-rely on the technology, creating opportunities for errors to slip into their work [14]. Used uncritically, AI risks producing sloppy thinkers (who can be rather annoying in eagerly sharing that programs like ChatGPT are making them faster and smarter).

One of the more interesting applications of AI is in medical interactions with the general public. Since the early days of the internet, the public has searched for symptoms on Yahoo (1990s), AltaVista (1995), and, for the last 20 years, Google, desperately seeking diagnoses and treatment advice. Much of that advice was almost certainly misplaced, and in many cases it was potentially harmful. Yet more and more patients turn to the internet for information; about 80% of adults in the US reportedly use it to seek health guidance [15]. Enter ChatGPT, as a means of synthesizing medical advice for websites. One such example is WebMD. A growing number of websites are using LLMs like ChatGPT as online 'clinical assistants' that provide patient support services [13].

The current literature strongly implies that the accuracy and relevance of ChatGPT's outputs depend on the quality of the prompts used [7][8]. This may point to another chink in ChatGPT's armour. The rise of voice search and its growing prominence in the search landscape cannot be ignored: it has been predicted that by next year (2025), 50% of all searches will be voice-based. The numbers of smartphones, smart speakers, wearables, and other gadgets are rising; they provide the convenience modern consumers crave and put the world at our fingertips. People have questions that need answering, but unlike written queries, spoken queries tend to be composed on the fly, which can markedly reduce prompt quality.

Considering the importance of sourcing accurate information for conditions like cancer, determining the veracity of AI (mis)information from chat platforms such as ChatGPT is key not only for patients but also for clinicians and other health stakeholders. There are exceptions where AI is considered to perform well. In one study, ChatGPT's answers to questions from the National Cancer Institute's "Common Cancer Myths and Misconceptions" web page were compared with the NCI's own answers [16]. The authors concluded that ChatGPT's information was consistent and accurate and was presented in a format that could be readily understood. They also observed that the answers lacked any potentially harmful content and, most importantly, did not appear susceptible to misinformation. This suggests that, in well-defined settings, AI could be successfully employed for healthcare communications.

As with the story of Pandora, there is always hope. Beyond medical communication, AI systems are being used extensively in medical research. The most common roles for AI include clinical decision support and image analysis [5][6]. These systems are task-specific, for example providing treatment recommendations based on the interpretation of key data. In such cases, with careful training on the right data, AI could eventually become mentor, mediator, and master of its specific discipline. Yet even in these narrow applications, an overwhelming belief in AI's superiority may bias judgement, including that of regulatory authorities. A review of 521 US FDA device authorizations showed that 144 were labelled as "retrospectively validated," 148 as "prospectively validated," and only 22 were validated using data from randomized controlled trials. Most notably, 226 of the 521 FDA-authorized devices (approximately 43%) lacked published clinical validation data [17].

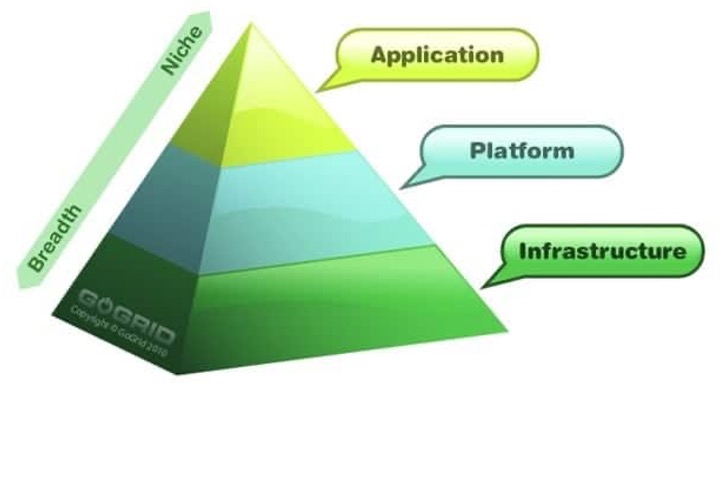

Any snapshot of AI's performance today will be out of date tomorrow: development is progressing rapidly across various dimensions, including advancements in algorithms, hardware, and applications. The availability of data for 'analysis,' both new and old, is also accelerating. Be prepared for:

- Algorithmic Improvements: New architectures, such as transformers, have revolutionized natural language processing and computer vision. Models like GPT-3 and its successors have demonstrated significant improvements in understanding and generating human-like text.

- Increased Computational Power: The availability of more powerful hardware, including graphics and tensor processing units, has accelerated the training of complex models. This has enabled researchers to experiment with larger datasets and more intricate architectures.

- Data Availability: The explosion of digital data has fuelled the training of AI models. This includes the growing availability of text, images, and structured data, allowing for more robust and capable AI systems. Wider availability of unstructured data is also not a million miles away.

- Research and Investment: There has been a surge in research output and funding for AI initiatives. Governments, universities, and private companies are investing heavily in AI research; expect continuing breakthroughs and innovations.

- Public Awareness and Ethical Considerations: As AI technologies become more prevalent, discussions around ethics, bias, and regulation are intensifying, possibly shaping the direction of future AI development.

We are starting to see the first reports in which AI systems outperform medical practitioners. LLMs are establishing footholds in a broad range of medical settings. It was originally envisaged that AI tools would supplement rather than replace practitioners and that early-career scientists would receive effective training in the appropriate use of these tools [3][6]. Outputs are improving constantly, and expanding usage is making AI ever more ubiquitous. Its march toward the singularity (the point at which technological growth becomes uncontrollable and irreversible) seems almost inevitable. As one pundit on AI's societal impact observed, "The best course of action is to embrace it, use its capabilities to improve our lives, and foster mutually beneficial relationships by evolving it in clinical medicine" [18].

In closing, AI offers the potential to enhance patient treatment and research, but not without concerns over accuracy, authorship, and bias. Its responses to users' medical questions are inherently limited by how the questions are posed and by the data it draws on, often resulting in vague answers [18]. Any 'opinion' it offers is based only on the data available to it, so it can reach erroneous conclusions when newer data have not yet been integrated. It also makes assessments in isolation, meaning it is not influenced by clinical consensus (though consensus is not always correct, either).

Like Pandora, AI seems to have been created to beguile us with the temptation of infinite knowledge, yet it brings the potential to introduce falsehood, treachery, and disobedience. Effectively, the troubles are already out of the box. All we have left is hope, and if you read the poems of Hesiod, you will appreciate the folly of trusting in that illusion.

References

- Huang J, Tan M. The role of ChatGPT in scientific communication: writing better scientific review articles. Am J Cancer Res. 2023;13(4):1148-1154.

- Chandra A, Dasgupta S. Impact of ChatGPT on medical research article writing and publication. Sultan Qaboos Univ Med J. 2023;23(4):429-432.

- Seckel E, Stephens BY, Rodriguez F. Ten simple rules to leverage large language models for getting grants. PLoS Comput Biol. 2024;20(3):e1011863.

- Stokel-Walker C. Many people think AI is already sentient - and that's a big problem. New Scientist. 18 July 2024:17.

- Kacena MA, Plotkin LI, Fehrenbacher JC. The use of artificial intelligence in writing scientific review articles. Curr Osteoporos Rep. 2024;22(1):115-121.

- Haver HL, Ambinder EB, Bahl M, Oluyemi ET, Jeudy J, Yi PH. Appropriateness of breast cancer prevention and screening recommendations provided by ChatGPT. Radiology. 2023;307(4):e230424.

- Niche Science & Technology Ltd. Artificial intelligence in medical writing: an insider's insight. 2024.

- Hardman TC. Love: An AI's inquiry into the essence of human connection. LinkedIn. 2024.

- Lingard L, et al. Will ChatGPT's free language editing service level the playing field in science communication?: Insights from a collaborative project with non-native English scholars. Perspect Med Educ. 2023;12:565-574.

- Amano T, et al. The manifold costs of being a non-native English speaker in science. PLOS Biol. 2023;21:e3002184.

- Castelvecchi D. Researchers built an 'AI Scientist' - what can it do? Nature. 2024.

- Niche Science & Technology Ltd. Cracking the case: an insider's insight into case reports. 2023.

- Kadi G, Aslaner MA. Exploring ChatGPT's abilities in medical article writing and peer review. Croat Med J. 2024;65:93-100.

- Dell'Acqua F, et al. Navigating the jagged technological frontier: field experimental evidence of the effects of AI on knowledge worker productivity and quality. Harvard Business School Working Paper 24-013. 2023.

- Calixte R, et al. Social and demographic patterns of health-related internet use among adults in the United States: A secondary data analysis of the health information national trends survey. Int J Environ Res Public Health. 2020;17:6856.

- Johnson SB, et al. Using ChatGPT to evaluate cancer myths and misconceptions: artificial intelligence and cancer information. JNCI Cancer Spectr. 2023;7:pkad015.

- El Fassi CS, et al. Not all AI health tools with regulatory authorization are clinically validated. Nat Med. 2024.

- Xue VW, et al. The potential impact of ChatGPT in clinical and translational medicine. Clin Transl Med. 2023;13:e1216.