Innovation in health communications is often misunderstood as a synonym for technology. Yet innovation is about one thing: connection, that is, finding novel ways to get people the information they need in a form that resonates. In this sense, innovation is a strategic, ethical, and human‑centred endeavour, one that redefines how scientific knowledge is translated, disseminated, and acted upon.

Traditional communication models (linear, document‑heavy, and slow) are increasingly misaligned with the realities of modern healthcare. Scientific knowledge is expanding at unprecedented speed, digital platforms are reshaping how people seek information, and patients are more empowered than ever. Meanwhile, misinformation proliferates across social channels and AI‑mediated environments, creating new challenges for trust and comprehension.

This shift demands a move toward audience‑centred, digitally mediated, and intent‑driven communication. Intent‑driven scientific communication, as described previously [1], emphasises an understanding of why audiences seek information, not merely what they ask. It reframes communication as a dynamic, context‑aware process that aligns scientific content with user motivations, cognitive load, and decision‑making needs.

Innovation, therefore, is not merely about adopting new tools. It encompasses new ways of listening, designing, validating, and engaging, ensuring that communication remains meaningful, equitable, and scientifically robust.

The AI Content Explosion: Quantity Without Guaranteed Quality

Generative AI has transformed the production of health‑related content. Large language models can now generate articles, summaries, patient materials, and even scientific‑style narratives in seconds. This capability offers enormous potential for scaling communication, but it also introduces profound risks.

AI systems can produce hallucinated information, fabricate citations, or generate plausible‑sounding but incorrect scientific claims [2]. Without rigorous human oversight, these outputs may undermine trust, particularly in high‑stakes domains such as medicine. It is a cause for serious concern that people are increasingly turning to social platforms and AI with questions about health and medicines, which makes ensuring accuracy and balance urgent.

Other risks frequently raised in the post-AI era include:

- Lack of source transparency: Many AI systems do not reveal where information originates, complicating verification.

- Variable scientific quality: Outputs may mix high‑quality evidence with outdated or low‑value material.

- Algorithmic bias: Models trained on skewed datasets may perpetuate inequities in representation or recommendations [3].

- Overproduction of low‑value content: The ease of generation can flood digital ecosystems with redundant or shallow material, diluting attention and obscuring high‑quality insights.

The result is information abundance paired with epistemic uncertainty: a landscape in which more content does not equate to more knowledge. For healthcare professionals and patients alike, distinguishing credible sources and insights from unqualified content and noise, such as endlessly paraphrased material, becomes increasingly difficult.

To navigate this environment, communicators must pair AI‑enabled scale with rigorous validation frameworks, transparent sourcing, and human‑in‑the‑loop governance. Innovation must enhance, not erode, trust.
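One small piece of such a validation framework can be sketched in code. The example below is a minimal illustration, not a complete governance process: it assumes that drafts mark sources with bracketed numbers such as [2], and it routes any sentence with no citation, or with a citation that is missing from the approved reference list, to a human reviewer. The names `triage_draft` and `ReviewItem` are hypothetical, and the draft text is placeholder content.

```python
import re
from dataclasses import dataclass

# Matches bracketed numeric citations such as [2].
CITATION = re.compile(r"\[(\d+)\]")


@dataclass
class ReviewItem:
    sentence: str
    reason: str


def triage_draft(draft: str, reference_ids: set[int]) -> list[ReviewItem]:
    """Return the sentences that need human review before publication."""
    flagged = []
    # Naive sentence split on terminal punctuation; adequate for a sketch only.
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        if not sentence:
            continue
        cited = {int(n) for n in CITATION.findall(sentence)}
        if not cited:
            flagged.append(ReviewItem(sentence, "no citation"))
        elif not cited <= reference_ids:
            missing = sorted(cited - reference_ids)
            flagged.append(ReviewItem(sentence, f"unknown reference {missing}"))
    return flagged


# Placeholder draft; the claims are illustrative, not real findings.
draft = (
    "Treatment A met its primary endpoint [1]. "
    "It works for every patient. "
    "Benefits were sustained at two years [7]."
)
for item in triage_draft(draft, reference_ids={1, 2}):
    print(f"{item.reason}: {item.sentence}")
```

A check like this only confirms that a source is named. Whether the source actually supports the claim remains a judgement for a qualified human reviewer.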

Veracity, Validity, and the Search for True Value

In health communications, veracity and evidence validity are non‑negotiable. The challenge is no longer access to information but the ability to identify what is credible, relevant, and actionable. Emerging AI‑assisted discovery tools are reshaping this landscape. Semantic search engines, retrieval‑augmented generation (RAG) systems, and voice‑enabled search interfaces allow users to navigate scientific knowledge more intuitively. Instead of relying on keyword matching, these systems interpret meaning, context, and intent, which aligns with our framework’s emphasis on understanding the user’s underlying purpose [1].

This evolution transforms search from a static retrieval process into intent‑aware knowledge navigation. For example:

- Semantic search identifies conceptually related evidence, even when terminology differs.

- RAG systems ground AI outputs in verified sources, reducing hallucination risk [4].

- Voice‑enabled search supports accessibility and real‑time clinical use.
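The retrieval step that grounds a RAG system can be illustrated with a toy sketch. This is a simplified illustration under stated assumptions, not the method of [4]: term counts stand in for the learned embeddings that a real semantic search engine would use, so the example will not match synonyms, and the call to a language model is omitted. The function names and the three‑entry corpus are hypothetical.

```python
import math
import re
from collections import Counter


def vectorise(text: str) -> Counter:
    """Term-count vector; a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Return up to k (source id, score) pairs with non-zero similarity, best first."""
    q = vectorise(query)
    scored = [(sid, cosine(q, vectorise(text))) for sid, text in corpus.items()]
    scored = [(sid, score) for sid, score in scored if score > 0]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


def grounded_prompt(query: str, corpus: dict[str, str], k: int = 2) -> str:
    """Build a prompt that restricts the model to the retrieved sources."""
    hits = retrieve(query, corpus, k)
    if not hits:
        return "No verified source matched; escalate to a human reviewer."
    context = "\n".join(f"[{sid}] {corpus[sid]}" for sid, _ in hits)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Hypothetical verified corpus, keyed by source id.
corpus = {
    "S1": "Spaced repetition improves long term retention of clinical guidelines.",
    "S2": "Interactive dashboards summarise trial enrolment by site.",
    "S3": "Retention of guideline knowledge declines without reinforcement.",
}
print(grounded_prompt("How can retention of guidelines be improved?", corpus))
```

The design point is that the model only sees passages drawn from a vetted corpus, each tagged with an id it can cite, and that a query with no matching source is escalated rather than answered.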

For communicators, this shift reinforces the need to design content that is structured, machine‑readable, and semantically rich. High‑value insights must be easy for both humans and algorithms to find, interpret, and trust. Innovation should augment knowledge and understanding, not merely introduce novelty. This aligns directly with the pursuit of veracity: innovation must elevate clarity, accuracy, and scientific integrity.

Transforming Scientific Data into Multimedia Experiences

One of the most transformative trends in health communications is the shift from text‑dominant formats to multimedia, multimodal experiences. Advances in digital tools and AI have dramatically lowered the barriers to producing:

- high‑quality animations,

- explainer videos,

- interactive dashboards,

- immersive storytelling environments,

- podcasts and audio summaries.

Previously, these formats required specialised production teams and significant budgets. Today, AI‑assisted platforms can generate storyboards, voiceovers, visualisations, and even 3D models in a fraction of the time and at a fraction of the cost, effectively democratising dissemination.

Multimedia formats enhance:

- Training: Interactive modules improve retention and engagement among healthcare professionals [5].

- Public engagement: Visual storytelling helps demystify complex scientific concepts.

- Scientific dissemination: Dynamic figures and animations can convey mechanisms of action or trial designs more effectively than static diagrams.

- Internal capability building: Teams can rapidly prototype and iterate communication assets.

Evidence from cognitive psychology shows that dual coding, combining verbal and visual information, significantly improves comprehension and recall [6]. Similarly, narrative‑driven multimedia supports emotional engagement, which is essential for behaviour change and patient empowerment.

Learning Science and the Multimodal/Dimensional Omnichannel Approach

Modern healthcare audiences are made up of clinicians, patients, policymakers, and industry professionals, all learning in fragmented, high‑pressure environments. Effective communication must therefore align with evidence‑based learning principles, including:

- Spaced repetition: Reinforcing key messages over time improves long‑term retention [7].

- Active recall: Engaging users in retrieval strengthens memory consolidation.

- Interleaving: Mixing topics enhances conceptual understanding.

- Dual coding: Combining text and visuals improves comprehension.

- Retrieval practice: Testing knowledge improves learning outcomes.

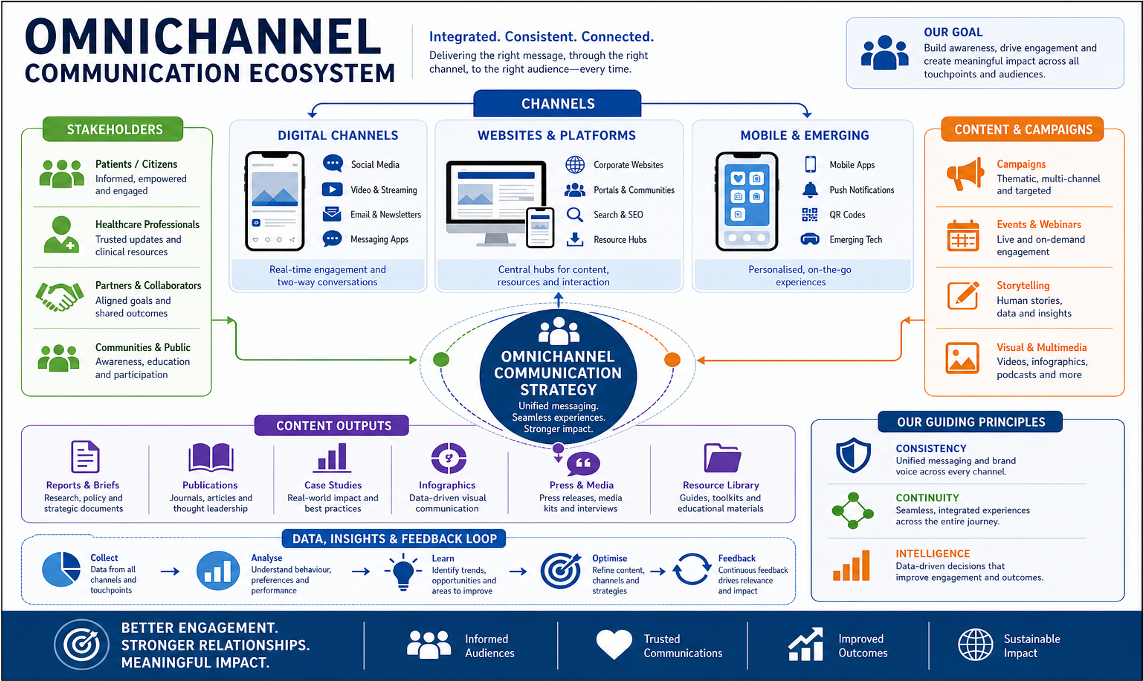
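Spaced repetition and retrieval practice can be combined in a simple review scheduler. The sketch below is illustrative only: the one‑day starting gap, the doubling factor, and the reset after a missed recall are assumptions chosen for clarity rather than values taken from [7], and the function names are hypothetical.

```python
from datetime import date, timedelta


def next_interval(current_days: int, recalled: bool, factor: int = 2) -> int:
    """Expand the gap after a successful recall; reset to 1 day after a miss."""
    return current_days * factor if recalled else 1


def schedule(start: date, outcomes: list[bool], first_gap_days: int = 1) -> list[date]:
    """Return review dates for one key message.

    The first review falls first_gap_days after start. Each entry in
    outcomes records whether the learner recalled the message at that
    review, and determines the gap before the next one.
    """
    gap = first_gap_days
    when = start + timedelta(days=gap)
    dates = [when]
    for recalled in outcomes:
        gap = next_interval(gap, recalled)
        when += timedelta(days=gap)
        dates.append(when)
    return dates


# A learner recalls the message twice, misses once, then recalls it again.
for review_date in schedule(date(2025, 1, 1), [True, True, False, True]):
    print(review_date.isoformat())
```

Each review doubles as a retrieval test, and its outcome decides when the message is reinforced next, so learners who are struggling see it sooner.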

Multimodal/dimensional approaches integrate text, visuals, audio, interactivity, and social engagement into a cohesive learning ecosystem. This mirrors how people naturally consume information across platforms. The omnichannel model extends this further by ensuring that messages are consistent, adaptive, and reinforced across contexts: scientific meetings, social media, medical education platforms, field medical interactions, and AI‑mediated search environments.

Adaptive learning systems, powered by AI, can personalise content based on user behaviour, knowledge gaps, and intent. This aligns with our proposed framework’s emphasis on tailoring communication to cognitive and emotional needs [1].

Innovation in learning science is therefore not about producing more content, but about designing smarter, more resonant, and more personalised learning journeys.

Additional Critical Considerations for the Future

We still have some way to go. Beyond technology and learning science, several structural considerations will shape the future of health communications:

- Ethical governance of AI: Clear frameworks are needed to ensure transparency, accountability, and fairness in AI‑generated content [8].

- Accessibility and inclusivity: Communication must address diverse literacy levels, cultural contexts, and accessibility needs, with particular attention to underserved communities.

- Combating misinformation: Collaborative, cross‑sector strategies are essential to counteract health misinformation, particularly in AI‑mediated environments [9].

- Regulatory compliance: As AI‑generated content proliferates, regulators are developing new guidelines for accuracy, attribution, and risk mitigation.

- Digital literacy: Both healthcare professionals and patients require support to navigate increasingly complex digital ecosystems.

- Measuring impact beyond vanity metrics: Engagement must be evaluated through behavioural, educational, and clinical outcomes, not merely impressions or clicks.

- Sustainability of innovation efforts: Innovation must be embedded into organisational culture, not treated as a one‑off initiative.

Conclusion: Innovation With Purpose

Innovation in health communications is not about chasing novelty. It is about purposeful, evidence‑based, human‑centred progress. New technologies nonetheless open up real potential, and now is the time to experiment and find what works best for us, both as humans and as individuals. The future will belong to communicators who balance:

- speed with scientific rigour,

- creativity with credibility,

- technology with trust,

- and scale with meaningful connection.

By embracing intent‑driven strategies, multimedia storytelling, learning science, and ethical AI governance, the field can evolve toward a more inclusive, impactful, and resilient model of scientific communication.

Innovation is not a buzzword. It is a responsibility.

References

1. Hardman TC. Intent‑Driven Scientific Communication. 2026.

2. Ji Z, Lee N, Frieske R, et al. Survey of hallucination in natural language generation. ACM Comput Surv. 2023;55(12):1–38.

3. Mehrabi N, Morstatter F, Saxena N, et al. A survey on bias and fairness in machine learning. ACM Comput Surv. 2021;54(6):1–35.

4. Lewis P, Perez E, Piktus A, et al. Retrieval‑augmented generation for knowledge‑intensive NLP tasks. Adv Neural Inf Process Syst. 2020;33:9459–9474.

5. Cook DA, Brydges R, Zendejas B, et al. Mastery learning for health professionals using technology‑enhanced simulation: a systematic review. Acad Med. 2013;88(8):1178–1186.

6. Mayer RE. Multimedia Learning. 3rd ed. Cambridge University Press; 2021.

7. Cepeda NJ, Pashler H, Vul E, et al. Distributed practice in verbal recall tasks: a review and quantitative synthesis. Psychol Bull. 2006;132(3):354–380.

8. Floridi L, Cowls J. A unified framework of five principles for AI in society. Harv Data Sci Rev. 2019;1(1).

9. Wang Y, McKee M, Torbica A, et al. Systematic literature review on the spread of health‑related misinformation on social media. Soc Sci Med. 2019;240:112552.